Scheduler

Input parameters for the cluster

These parameters are located in input/omnia_config.yml.

Caution

Do not remove or comment out any lines in the input/omnia_config.yml file.

| Variables | Details |
|---|---|
| scheduler_type | Default value: |
| mariadb_password | |
| k8s_version | |
| k8s_cni | |
| k8s_pod_network_cidr | |
| docker_username | |
| docker_password | |
| ansible_config_file_path | |
| enable_omnia_nfs | |
| omnia_usrhome_share | Default value: “/home/omnia-share” |

Note

- The input/omnia_config.yml file is encrypted on the first run of the provision tool.

To view the encrypted parameters:

ansible-vault view omnia_config.yml --vault-password-file .omnia_vault_key

To edit the encrypted parameters:

ansible-vault edit omnia_config.yml --vault-password-file .omnia_vault_key

Before you build clusters

Verify that all inventory files are updated.

If the target cluster requires more than 10 Kubernetes nodes, use a Docker enterprise account to avoid Docker pull limits.

Verify that all nodes are assigned a group. Use the inventory as a reference.

The manager group should have exactly 1 manager node.

The compute group should have at least 1 node.

The login group is optional. If present, it should have exactly 1 node.

Users should also ensure that all repos are available on the target nodes running RHEL.
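The group rules above can be checked before a run. The following is a minimal sketch, assuming an INI-style inventory with one host per line under the [manager], [compute], and [login] headers. The function names count_hosts and check_inventory_groups are hypothetical helpers, not part of Omnia.

```shell
# Hypothetical helpers (not part of Omnia): count the hosts in each
# inventory group and compare the counts with the rules listed above.

count_hosts() {
    # $1 = inventory file, $2 = group name (without brackets)
    awk -v group="[$2]" '
        /^[ \t]*\[/ { in_group = ($1 == group); next }   # a section header starts or ends the group
        in_group && NF && $1 !~ /^[#;]/ { n++ }          # count non-empty, non-comment lines
        END { print n + 0 }
    ' "$1"
}

check_inventory_groups() {
    # $1 = inventory file; returns 0 only if every rule holds
    inv="$1"
    rc=0
    managers=$(count_hosts "$inv" manager)
    computes=$(count_hosts "$inv" compute)
    logins=$(count_hosts "$inv" login)

    [ "$managers" -eq 1 ] || { echo "manager group needs exactly 1 node (found $managers)"; rc=1; }
    [ "$computes" -ge 1 ] || { echo "compute group needs at least 1 node (found $computes)"; rc=1; }
    [ "$logins" -le 1 ]   || { echo "login group allows at most 1 node (found $logins)"; rc=1; }
    return "$rc"
}
```

For example, `check_inventory_groups inventory && ansible-playbook omnia.yml -i inventory` would start the playbook only when the group counts look right. The check does not verify that the hosts resolve or are reachable.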

Note

The inventory file accepts both IPs and FQDNs as long as they can be resolved by DNS.

Nodes provisioned using the Omnia provision tool do not require a RedHat subscription to run scheduler.yml on RHEL target nodes.

For RHEL target nodes not provisioned by Omnia, ensure that a RedHat subscription is enabled on every target node.

Features enabled by omnia.yml

Slurm: Once all the required parameters in omnia_config.yml are filled in, omnia.yml can be used to set up Slurm.

Login Node (Additionally secure login node)

Kubernetes: Once all the required parameters in omnia_config.yml are filled in, omnia.yml can be used to set up Kubernetes.

BeeGFS bolt on installation

NFS bolt on support

Building clusters

In the input/omnia_config.yml file, provide the required details.

Note

Use the parameter scheduler_type in input/omnia_config.yml to customize what schedulers are installed in the cluster.

Without the login node, Slurm jobs can be scheduled only through the manager node.

Create an inventory file in the omnia folder. Add the manager node IP address under the [manager] group, the compute node IP addresses under the [compute] group, and the login node IP address under the [login] group. Check out the sample inventory for more information.
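As a rough sketch of the inventory layout described above, the following writes a minimal inventory file with one manager, two compute nodes, and one login node. The IP addresses and the INVENTORY_FILE variable are placeholders chosen for illustration, not values from Omnia; replace them with the addresses of your own nodes.

```shell
# Write a minimal inventory file.
# All IP addresses below are assumed placeholders; use your own.
INVENTORY_FILE="${INVENTORY_FILE:-./inventory}"

cat > "$INVENTORY_FILE" <<'EOF'
[manager]
10.5.0.101

[compute]
10.5.0.102
10.5.0.103

[login]
10.5.0.104
EOF
```

The [login] group can be left out entirely if the cluster has no login node.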

Note

RedHat nodes that are not configured by Omnia need to have a valid subscription. To set up a subscription, click here.

Omnia creates a log file which is available at: /var/log/omnia.log.

If only Slurm is being installed on the cluster, Docker credentials are not required.

To run omnia.yml:

ansible-playbook omnia.yml -i inventory

Note

To visualize the cluster (Slurm/Kubernetes) metrics on Grafana (on the control plane) during the run of omnia.yml, add the parameters grafana_username and grafana_password (that is, ansible-playbook omnia.yml -i inventory -e grafana_username="" -e grafana_password=""). If Grafana is not available on the control plane, omnia.yml does not install it.

Having the same node in the manager and login groups in the inventory is not recommended by Omnia.

If you want to view or edit the omnia_config.yml file, run one of the following commands.

To view the file:

ansible-vault view omnia_config.yml --vault-password-file .omnia_vault_key

To edit the file:

ansible-vault edit omnia_config.yml --vault-password-file .omnia_vault_key

It is suggested that you use the ansible-vault view or edit commands rather than the ansible-vault decrypt or encrypt commands. If you have used the ansible-vault decrypt or encrypt commands, provide 644 permission to omnia_config.yml.

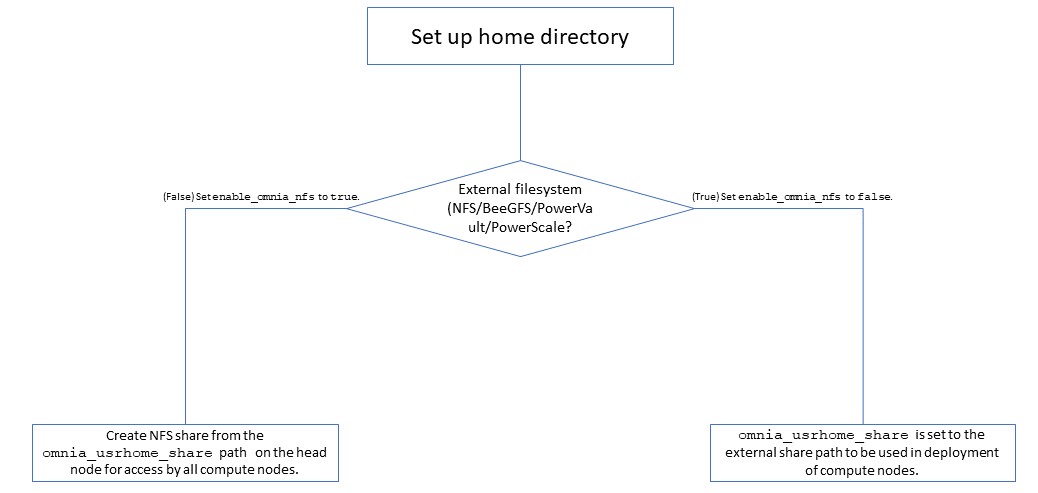

Setting up a shared home directory

- Users wanting to set up a shared home directory for the cluster can do it in one of two ways:

Using the head node as an NFS host: Set enable_omnia_nfs (input/omnia_config.yml) to true and provide a share path which will be configured on all nodes in omnia_usrhome_share (input/omnia_config.yml). During the execution of omnia.yml, the NFS share will be set up for access by all compute nodes.

Using an external filesystem: Configure the external file storage using storage.yml. Set enable_omnia_nfs (input/omnia_config.yml) to false and provide the external share path in omnia_usrhome_share (input/omnia_config.yml). Run omnia.yml to configure access to the external share for deployments.

Kubernetes Roles

As part of setting up Kubernetes roles, omnia.yml handles the following tasks on the manager and compute nodes:

Docker is installed.

Kubernetes is installed.

Helm package manager is installed.

All required services are started (such as kubelet).

Different operators are configured via Helm.

Prometheus is installed.

Slurm Roles

As part of setting up Slurm roles, omnia.yml handles the following tasks on the manager and compute nodes:

Slurm is installed.

All required services are started (such as slurmd, slurmctld, slurmdbd).

Prometheus is installed to visualize Slurm metrics.

Lua and Lmod are installed as Slurm modules.

Slurm restd is set up.

Login node

If a login node is available and mentioned in the inventory file, the following tasks are executed:

Slurmd is installed.

All required configurations are made to the slurm.conf file to enable a Slurm login node.

- Hostname requirements

The hostname should not contain the following characters: , (comma), . (period) or _ (underscore). However, commas and periods are allowed in the domain name.

The hostname cannot start or end with a hyphen (-).

No upper case characters are allowed in the hostname.

The hostname cannot start with a number.

The hostname and the domain name (that is: hostname00000x.domain.xxx) cumulatively cannot exceed 64 characters. For example, if the node_name provided in input/provision_config.yml is ‘node’, and the domain_name provided is ‘omnia.test’, Omnia will set the hostname of a target compute node to ‘node00001.omnia.test’. Omnia appends 6 digits to the hostname to individually name each target node.
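The hostname requirements above can be expressed as a short check. This is a minimal sketch, not Omnia's own validation: the function name validate_hostname is hypothetical, it expects the final hostname (with the digits already appended), and it assumes the 64-character limit covers the whole hostname.domain string including the separating period.

```shell
# Hypothetical helper (not part of Omnia): check a hostname and domain
# name against the requirements listed above.
# Usage: validate_hostname <hostname> <domain_name>
validate_hostname() {
    host="$1"
    domain="$2"

    case "$host" in
        "")            echo "hostname is empty" >&2; return 1 ;;
        *[,._]*)       echo "hostname contains a comma, period or underscore" >&2; return 1 ;;
        -*|*-)         echo "hostname starts or ends with a hyphen" >&2; return 1 ;;
        *[[:upper:]]*) echo "hostname contains upper case characters" >&2; return 1 ;;
        [0-9]*)        echo "hostname starts with a number" >&2; return 1 ;;
    esac

    # Assumption: the limit applies to "<hostname>.<domain>" as one string.
    fqdn="$host.$domain"
    if [ "${#fqdn}" -gt 64 ]; then
        echo "hostname and domain name exceed 64 characters" >&2
        return 1
    fi
    return 0
}
```

For example, `validate_hostname node00001 omnia.test` returns success, while `validate_hostname Node_1 omnia.test` prints a reason on standard error and returns a non-zero status.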

Note

To enable the login node, ensure that the login group in the inventory has the intended IP populated.

Slurm job based user access

To ensure security while running jobs on the cluster, users can be assigned permissions to access compute nodes only while their jobs are running. To enable the feature:

cd scheduler

ansible-playbook job_based_user_access.yml -i inventory

Note

The inventory queried in the above command is to be created by the user prior to running omnia.yml, as scheduler.yml is invoked by omnia.yml.

Only users added to the ‘slurm’ group can execute Slurm jobs. To add users to the group, use the command:

usermod -a -G slurm <username>

Running Slurm MPI jobs on clusters

To enhance the productivity of the cluster, Slurm allows users to run jobs in a parallel-computing architecture. This is used to efficiently utilize all available computing resources.

Note

Omnia does not install MPI packages by default. Users hoping to leverage the Slurm-based MPI execution feature are required to install the relevant packages from a source of their choosing. For information on setting up Intel OneAPI on the cluster, click here.

Ensure there is an NFS node on which to host slurm scripts to run.

Running jobs as individual users (and not as root) requires that passwordless SSH be enabled between compute nodes for the user.

For Intel

To run an MPI job on an Intel processor, set the following environment variables on the head nodes or within the job script:

I_MPI_PMI_LIBRARY=/usr/lib64/pmix/

FI_PROVIDER=sockets (When InfiniBand network is not available, this variable needs to be set)

LD_LIBRARY_PATH (Use this variable to point to the location of the Intel/Python library folder. For example: $LD_LIBRARY_PATH:/mnt/jobs/intelpython/python3.9/envs/2022.2.1/lib/)
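As a sketch of how these settings might be applied inside a job script, the function below exports the three variables using the example values from this section. The function name setup_intel_mpi_env and the INTEL_LIB_DIR override are hypothetical conveniences, not part of Omnia or Intel MPI, and the library path should be changed to match your installation.

```shell
# Hypothetical helper: export the Intel MPI settings described above.
# Paths are the examples from this section; adjust them for your cluster.
setup_intel_mpi_env() {
    # Assumed override variable so the library folder can be changed per site.
    INTEL_LIB_DIR="${INTEL_LIB_DIR:-/mnt/jobs/intelpython/python3.9/envs/2022.2.1/lib/}"

    export I_MPI_PMI_LIBRARY=/usr/lib64/pmix/

    # Only needed when an InfiniBand network is not available.
    export FI_PROVIDER=sockets

    # Append to any existing value; avoids a leading ':' when the variable is empty.
    export LD_LIBRARY_PATH="${LD_LIBRARY_PATH:+$LD_LIBRARY_PATH:}$INTEL_LIB_DIR"
}
```

Calling `setup_intel_mpi_env` near the top of the job script, before the MPI program is launched, makes the variables visible to the processes started by that script.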


For AMD

To run an MPI job on an AMD processor, set the following environment variables on the head nodes or within the job script:

PATH (Use this variable to point to the location of the OpenMPI binary folder. For example: PATH=$PATH:/appshare/openmpi/bin)

LD_LIBRARY_PATH (Use this variable to point to the location of the OpenMPI library folder. For example: $LD_LIBRARY_PATH:/appshare/openmpi/lib)

OMPI_ALLOW_RUN_AS_ROOT=1 (To run jobs as a root user, set this variable to 1)

OMPI_ALLOW_RUN_AS_ROOT_CONFIRM=1 (To run jobs as a root user, set this variable to 1)
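The AMD settings can be grouped in the same way. This is a minimal sketch using the example paths from this section. The function name setup_openmpi_env and the OPENMPI_HOME override are hypothetical, and setting the two root-related variables only when the script runs as root is a design choice of this sketch rather than something Omnia requires.

```shell
# Hypothetical helper: export the OpenMPI settings described above.
# /appshare/openmpi is the example location from this section.
setup_openmpi_env() {
    # Assumed override variable so the install prefix can be changed per site.
    OPENMPI_HOME="${OPENMPI_HOME:-/appshare/openmpi}"

    export PATH="$PATH:$OPENMPI_HOME/bin"

    # Append to any existing value; avoids a leading ':' when the variable is empty.
    export LD_LIBRARY_PATH="${LD_LIBRARY_PATH:+$LD_LIBRARY_PATH:}$OPENMPI_HOME/lib"

    # Both variables must be set to 1 before OpenMPI will run jobs as root.
    if [ "$(id -u)" -eq 0 ]; then
        export OMPI_ALLOW_RUN_AS_ROOT=1
        export OMPI_ALLOW_RUN_AS_ROOT_CONFIRM=1
    fi
}
```

Jobs submitted by regular users leave the root-related variables unset, so the function can be shared between root and non-root job scripts.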

If you have any feedback about Omnia documentation, please reach out at omnia.readme@dell.com.